Scenario

This test simulates load on an e-commerce API where multiple users access data every few seconds. A total of 100 requests are executed using concurrent virtual users to measure response time and system behaviour.

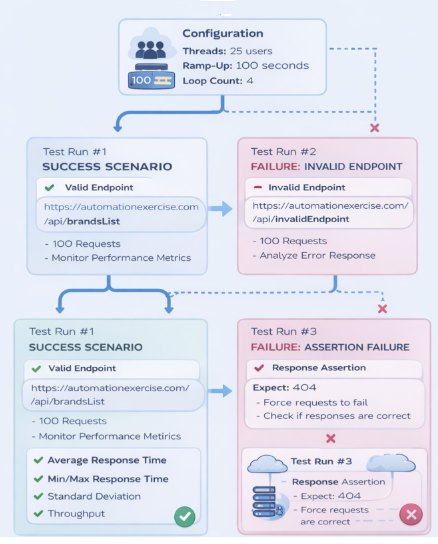

Configuration

- Endpoint: https://automationexercise.com/api/brandsList

- Thread Group:

  - Number of Threads: 25 users

  - Ramp-Up Period: 100 seconds

  - Loop Count: 4

👉 Total Requests: 25 × 4 = 100 requests

- HTTP Request:

  - Method: GET

  - Protocol: https

  - Server Name: automationexercise.com

  - Path: api/brandsList
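The Thread Group arithmetic can be checked with a few lines of Python. This is a minimal sketch, not part of the JMeter test plan: the `ThreadGroup` class and its method names are hypothetical, and the start interval assumes JMeter spreads thread start-up evenly across the ramp-up period.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ThreadGroup:
    """Hypothetical stand-in for the three Thread Group settings."""
    threads: int            # Number of Threads (virtual users)
    ramp_up_seconds: float  # Ramp-Up Period
    loops: int              # Loop Count

    def total_requests(self) -> int:
        # Each thread runs the single HTTP sampler once per loop.
        return self.threads * self.loops

    def start_interval_seconds(self) -> float:
        # Assumption: threads are started evenly over the ramp-up period.
        return self.ramp_up_seconds / self.threads


plan = ThreadGroup(threads=25, ramp_up_seconds=100, loops=4)
print(plan.total_requests())          # 100
print(plan.start_interval_seconds())  # 4.0
```

With these settings a new virtual user starts roughly every 4 seconds, so the 25 users only all run at once if the earlier threads are still looping when the last one starts.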

API Performance Testing Workflow:

Test Execution with JMeter:

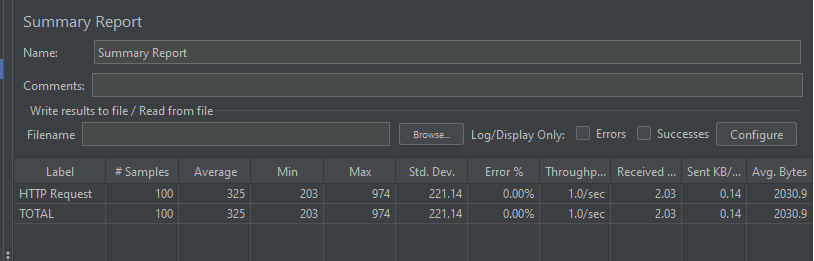

Test Run #1 — Success Scenario

✔️ What happens:

- All requests are sent successfully

- The API responds with valid data

📊 What to analyze:

- Average Response Time → overall performance

- Min / Max → consistency

- Standard Deviation → stability under load

- Throughput → requests per second

Interpretation:

- Acceptable average → the system performs well at this load

- Low deviation → stable, consistent response times

- Low throughput → expected here, because the test generates only a light load
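To make the metrics above concrete, here is a minimal sketch of how they can be computed from a list of response times. The `summarize` function and the sample values are hypothetical and for illustration only; they are not results from this test. The sketch uses the population standard deviation, and a given tool may use a different form.

```python
import statistics


def summarize(elapsed_ms, duration_seconds):
    """Return the summary figures discussed above for one test run."""
    return {
        "average_ms": statistics.mean(elapsed_ms),
        "min_ms": min(elapsed_ms),
        "max_ms": max(elapsed_ms),
        # Population standard deviation over all samples of the run.
        "std_dev_ms": statistics.pstdev(elapsed_ms),
        # Throughput = completed requests / wall-clock duration of the run.
        "throughput_per_s": len(elapsed_ms) / duration_seconds,
    }


# Hypothetical response times in milliseconds, for illustration only.
sample = [200, 220, 180, 200]
report = summarize(sample, duration_seconds=2.0)
for name, value in report.items():
    print(f"{name}: {value:.2f}")
```

A wide gap between min and max, or a standard deviation that is large relative to the average, points to inconsistent responses even when the average itself looks acceptable.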

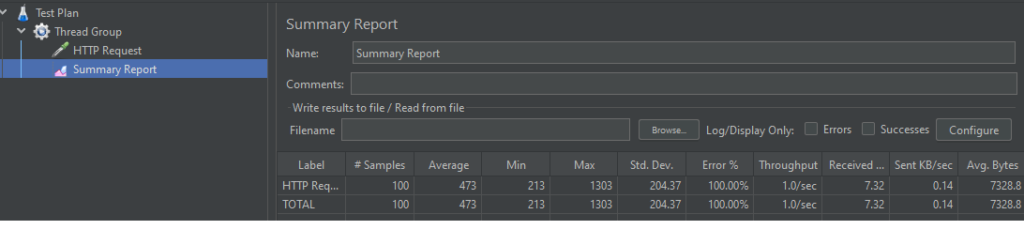

❌ Test Run #2 — Failure (Invalid Endpoint)

👉 Change endpoint to:

/api/invalidEndpoint

✔️ What this tests:

- How the system handles incorrect requests

- Error response behaviour

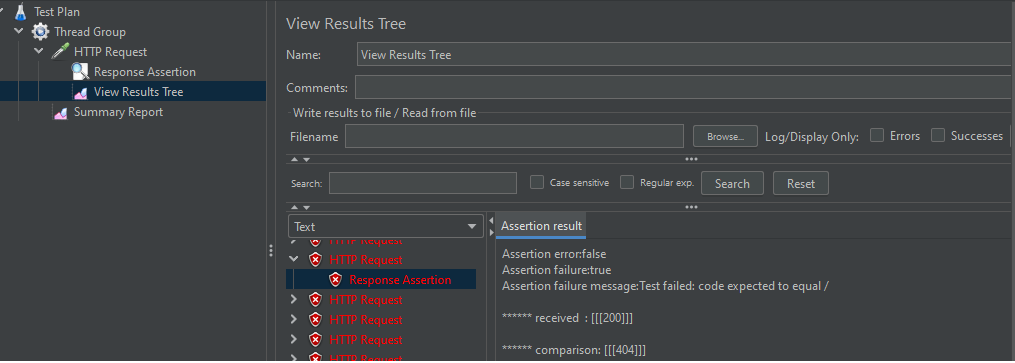

❌ Test Run #3 — Assertion Failure

Add a Response Assertion:

- Expect: 200

- Force failure by expecting a wrong value (e.g. 404)

✔️ What this tests:

- Validation logic

- Detection of incorrect responses
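The logic of a response-code assertion can be sketched in Python. This is an illustration of the idea only, not JMeter's implementation; the function name `assert_response_code` and its message format are assumptions.

```python
from typing import Tuple


def assert_response_code(actual: int, expected: int) -> Tuple[bool, str]:
    """Pass only when the response code matches the expected value exactly."""
    if actual == expected:
        return True, ""
    return False, f"expected response code {expected}, got {actual}"


# Assume the API answers 200: the first check passes,
# the second is forced to fail by expecting 404.
passed_ok, msg_ok = assert_response_code(200, 200)
passed_forced, msg_forced = assert_response_code(200, 404)
print(passed_ok, msg_ok)
print(passed_forced, msg_forced)
```

The forced failure confirms that the assertion really inspects the response rather than passing unconditionally.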

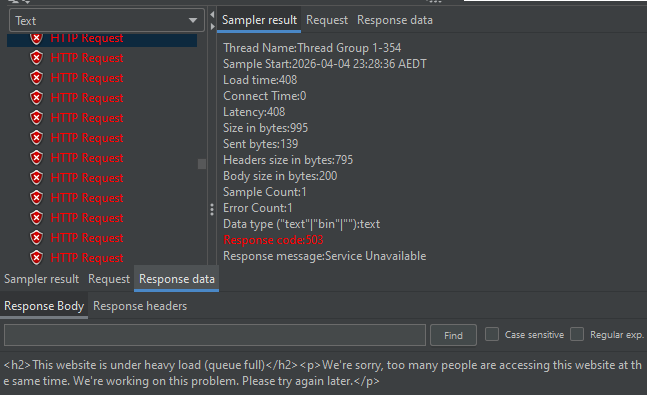

❌ Test Run #4 — Overload Method

Set the number of threads to 500, the ramp-up period to 0.01 seconds, and the delay to 5000 ms.

✔️ What this tests:

- System behaviour under extreme load

- Stability when handling concurrent users

- How the API responds when overloaded (e.g., 503 errors)
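One way to read an overload run is to group the returned status codes by class and compute an error rate. The sketch below assumes the status codes have already been exported from the results; the `status_breakdown` function and the sample codes are hypothetical and do not come from this test.

```python
from collections import Counter


def status_breakdown(status_codes):
    """Group HTTP status codes by class (2xx, 4xx, 5xx) and compute the error rate."""
    classes = Counter(f"{code // 100}xx" for code in status_codes)
    # Treat client (4xx) and server (5xx) errors as failed requests.
    errors = classes.get("4xx", 0) + classes.get("5xx", 0)
    error_rate = errors / len(status_codes) if status_codes else 0.0
    return dict(classes), error_rate


# Hypothetical status codes from an overloaded run, for illustration only.
codes = [200] * 6 + [503] * 3 + [429]
breakdown, rate = status_breakdown(codes)
print(breakdown)
print(f"error rate: {rate:.0%}")
```

A rising share of 5xx responses as the thread count grows suggests the server is saturating rather than rejecting malformed requests.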

What This Demonstrates:

- Performance testing under load

- System behaviour with multiple users

- Error handling (invalid requests)

- Validation of expected responses

- QA mindset beyond functional testing

“Performance testing is not just about speed. It’s about understanding how the system behaves when real users hit it.”